You can fool all the people some of the time, and some of the people all the time, but you cannot fool all the people all the time.

Abraham Lincoln

“Around the world, government actors are using social media to manufacture consensus, automate suppression, and undermine trust in the liberal international order,” according to the 2019 report by the Oxford Internet Institute at the University of Oxford on manipulation and disinformation in social media. Computational propaganda – the use of algorithms, automation, and big data to shape public life – is becoming a pervasive and ubiquitous part of everyday life.

Social media has been co-opted by many authoritarian regimes. In 26 countries, computational propaganda is being used as a tool of information control in three distinct ways: to suppress fundamental human rights, discredit political opponents, and drown out dissenting opinions.

After analyzing 28 countries, Oxford University’s Computational Propaganda Research Project found that almost all authoritarian regimes ran social media campaigns to influence their own populations; some of them also tried to spread favorable messages abroad. By contrast, the campaigns organized by the vast majority of democratic countries mainly target international audiences, while political parties, as a rule, run social media campaigns aimed at their own voters.

It is worth noting that, as a global trend, most of the social media users involved in government-run campaigns are authentic rather than fake. In countries such as Vietnam and Tajikistan, government structures prompt cyber activists loyal to them to use authentic profiles to spread government propaganda, troll political opponents and mass-report undesirable information. As social media companies intensify their account takedown campaigns, the use of authentic accounts is expected to grow further.

Cyber activists are using various methods to exert influence on social media users. The Report on Political Campaigning for 2019 outlines the following varieties of online activities:

- Spreading government or party propaganda;

- Attacking the opposition and mounting smear campaigns;

- Diverting attention from important issues to secondary ones;

- Aggravating political confrontation and polarization;

- Suppressing activity through personal attacks and harassment.

To make it clear to users exactly who stands behind a given advertisement or information campaign, Facebook, for example, has launched discussions on the need for greater transparency, in particular about the sources of political ads. Such transparency would show which group is behind a given activity, how much it spends and what messages it uses to win voters’ hearts. The need to disclose non-transparent political ads was put on Facebook’s agenda after it emerged that an estimated 10 million people in the U.S. had seen ads placed by Russian-backed Facebook pages. As a continuation of this process, Twitter decided to stop accepting advertising from all accounts owned by Russia’s propaganda channels, Russia Today (RT) and Sputnik.

Georgia has not been covered by the above-mentioned reports so far, but it is clear to observers of local social and political developments that what the reports describe is familiar to us. We know for sure that the following tools are used to shape public opinion and gain support in Georgia:

- Facebook pages that try to spread a pro-government narrative.

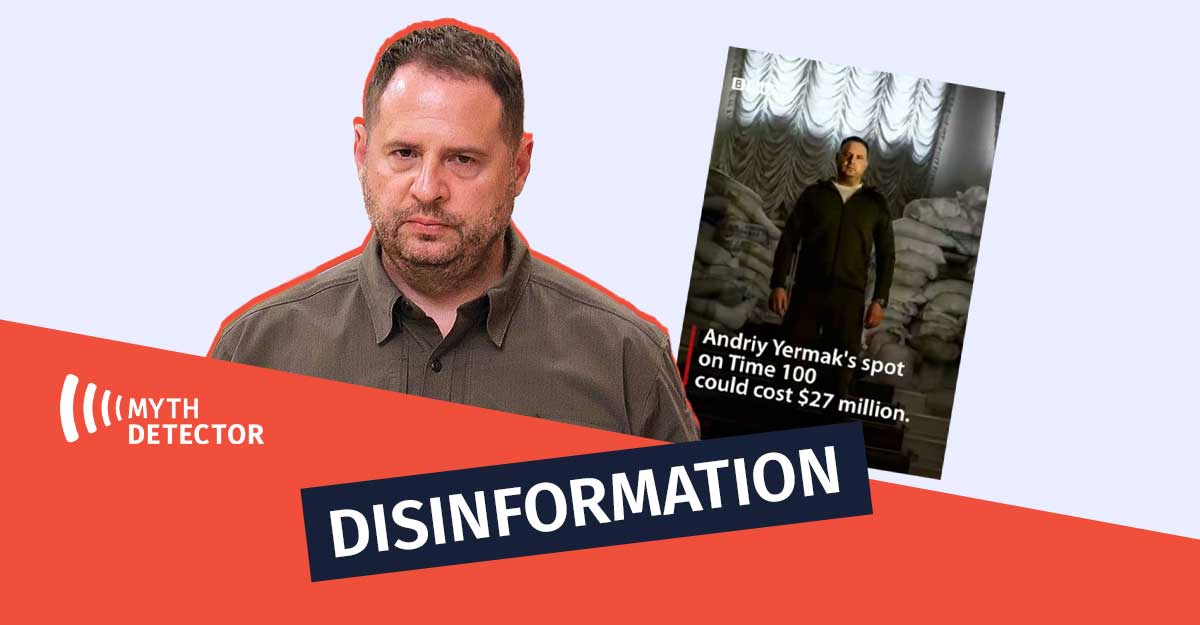

- Trolls and bots, acting in an organized manner against critical opinions, activists, government’s opponents, independent media and opposition forces.

- Fake news inciting homophobic and xenophobic sentiments in online information channels and anti-migrant statements by politicians.

- Use of hate speech by government officials, insulting statements against socially active persons and attacks against opponents.

- Russian-affiliated organizations promoting their interests with Neo-Nazi rhetoric.

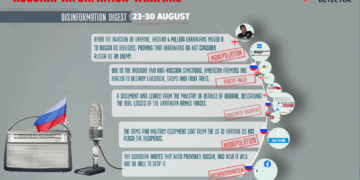

- The Russian propaganda outlet Sputnik, operating in Georgian on Tbilisi-controlled territory, as well as in Abkhazia and the Tskhinvali region.

- Pro-Russian parties and media organizations belonging to them.

These tools are mainly used to incite anti-Western sentiments, spread the pro-Kremlin narrative or marginalize critical opinions; in some cases, there are suspicions that they are encouraged or supported by the government.

In this situation, when signs of a government crisis are visible in the country and parliamentary elections are looming in 2020, it is difficult to find clear answers to a number of questions, among them:

- Who encourages hate groups?

- How can disinformation be combated without harming freedom of speech and expression?

- How much will the narrative of Facebook groups producing and spreading trash content affect the results of elections?

- How much and whose money is spent on spreading fake news and content inciting polarization?

- Should the spread of pro-government narratives by various non-governmental and unofficial pages be considered an illegal donation?

Different countries answer these questions in different ways. For example, French President Emmanuel Macron banned two Russian propaganda outlets, Russia Today and Sputnik, from participating in the events planned as part of his presidential campaign.

The Czech Republic operates the Center against Terrorism and Hybrid Threats. Its creation was one of the recommendations that stemmed from the preliminary conclusions of the National Security Audit, which identified various types of hybrid threats, including foreign disinformation campaigns.

In Germany, online platforms are obliged to remove “manifestly unlawful” content, such as hate speech, defamation and encouragement of violence, within 24 hours of receiving a complaint. Otherwise, under the Network Enforcement Act adopted by the Bundestag, the networks may be fined.

The European Commission has adopted the Code of Conduct on Countering Illegal Hate Speech Online and the European Union has created the East Strategic Communications Task Force; in addition, the European Parliament is working on the issues of regulating disinformation with artificial intelligence.

In turn, Facebook is working to ensure more protection and transparency, promising candidates more protection ahead of the U.S. presidential elections in 2020 and Internet users less unverified information. The separate initiatives listed above are unlikely to eradicate the spread of fake news online or neutralize the sentiments of hate groups. They do, however, show the readiness of various countries and institutions to face up to a problem that poses a threat not only to individual groups, but also to democracies and the liberal agenda.

Summarizing the activities of the thematic inquiry group on disinformation and propaganda, MP Nino Goguadze, the keynote speaker, talked about the steps needed to reduce Russian disinformation and propaganda and focused on bad behavior by some opposition lawmakers. According to the initial plan, the thematic inquiry group should have developed a conclusion and recommendations in May 2019, and later in November 2019. However, no such document has been prepared so far. Thus the only parliamentary group that should have developed recommendations for the various branches of government on how to counter the informational influence of a foreign country has proved ineffective. The most valuable work done by this group is arguably its collection of information on disinformation and propaganda from various non-governmental organizations, covering all the challenges faced by the country. Such futile work by the parliamentary group sends a clear message to the public and reflects the government’s attitude toward, and readiness for, combating information threats.

Experts offer different answers to the question of what the solution is, especially when the behavior of government officials resembles that of disinformation promoters and the government uses trolls for its own purposes.

What can we do when even the government uses trolls for disinformation? @luca @annegretbendiek @raeuberhose @alexkaterdemos @tavisupleba

— Nastasia Arabuli (@nastasiaarabuli) November 25, 2019

Silvia Stöber, a freelance journalist, sees the solution in strong and independent media. Luca Hammer, a social media analyst, believes that adopting relevant laws and constantly exposing fake news, which is an endless process, may be the right strategy. Karolin Schwarz, who created Hoaxmap, a platform to counter fake news, considers laws less reliable and believes that such resources, together with journalists trained in exposing fake news, deliver important results in combating disinformation.

Correspondent of Radio Free Europe/Radio Liberty, especially for Myth Detector

The article is published within the framework of the project #FIGHTFAKE, which is implemented by MDF in cooperation with its partner organisation Deutsche Gesellschaft e.V.