Using videos out of context to spread fake news is nothing new. However, the latest concern when it comes to disinformation is so-called ‘deepfakes’: manipulated videos created with artificial intelligence.

Deepfakes can make it look as if somebody did or said something he or she never did or said. There is Obama insulting President Trump (“President Trump is a total and complete dip-****.”) or Mark Zuckerberg enthusing about the power he gets from stolen data. Both were created with lip-sync technology that matches an impersonator’s video or voice to an existing clip of Obama or Zuckerberg. The result looks surprisingly real.

A few years ago, only Hollywood studios and large visual effects companies were able to manipulate video convincingly, and they invested millions of dollars in the process. Soon enough, nearly anyone will have the technology to digitally alter videos. Advances in deep learning have made it easier to create fake videos that look very realistic, and code released by an anonymous source is openly available. Just imagine if someone created a fake video of a world leader declaring war. Researchers from the University of California, Berkeley, and the University of Southern California are warning about deepfake technology: “These so called deep fakes pose a significant threat to our democracy, national security, and society.” Experts are especially concerned about the possible effects of deepfakes on the upcoming 2020 elections in the US.

So far, the most prominent deepfakes circulating online were made for entertainment, such as clips in which Nicolas Cage’s face is inserted into every movie imaginable (https://www.youtube.com/watch?v=_OqMkZNHWPo). The algorithms behind them are trained with large amounts of data, such as images or video clips. The larger and more diverse this data is in terms of lighting conditions and facial expressions, the better the result.

A recent study by the company ‘Deeptrace’ found that almost 15,000 deepfake videos are circulating online, 96 percent of them pornographic. One of the risks, then, is that the technology could be used for ‘revenge porn’ by swapping a person’s face into an existing pornographic video, making deepfakes a means of defamation and blackmail. Celebrities such as Emma Watson and Scarlett Johansson have already been targeted by deepfake pornography.

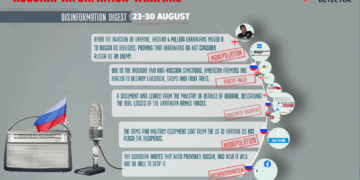

Photos and videos have been manipulated for as long as they have existed. Deepfakes could also be used to spread false information, possibly making misinformation campaigns even more dangerous. What would that mean for countries that are already dealing with fake news on a daily basis? Take, for example, Georgia, a country with a polarized political system. Politicians here even see disinformation as a security issue: “Foreign countries are using propaganda to influence our internal policy,” said Nino Goguadze, a Member of Parliament and of the Committee of Foreign Affairs. Disinformation is widespread, coming not only from Russia but also from within the country.

A recent investigation by Myth Detector, a Georgian fact-checking web platform, revealed that the pro-government blogger Giorgia Agapashvili (გიორგი აღაპიშვილის ბლოგი), a vocal critic of political opponents of the ruling Georgian Dream party, is not a real person: the blogger’s photo turned out to be AI-generated. The case points to the problem of homegrown propaganda.

So would deepfakes really mean more dangerous disinformation campaigns in Georgia? “Here it is easy to make cheap propaganda, so there is no need for deepfakes,” said Sopho Gelava, a researcher and editor at the Media Development Foundation. The problem, rather, is that certain narratives are repeated over and over again. In addition, not everybody questions the news they find on the internet.

According to Tamar Kintsurashvili, Executive Director of the Georgian NGO Media Development Foundation, the main strategy behind disinformation in Georgia is fearmongering. Examples include the threat of war or of losing territorial claims as a result of joining NATO.

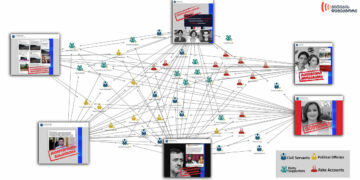

But the way fake news is designed is often not very sophisticated. Fake online media outlets are created by copying the domain names of Western media such as the Guardian, just leaving out the dot over the i or changing the “.com” to a “.ge”. Images are simply put in a different, misleading context to tell a story totally different from the original one. Or satirical and humorous articles from English-language media are presented as real stories, such as “In America, a museum employee was arrested for raping wax figures of Obama and others” or “Nanny, Under Strong Influence of Drugs, Decided to Eat a 3-Month-Old Infant”. The strategy behind this is to portray bestiality and necrophilia as common practice in Western countries in order to convey the narrative that closer ties with countries like the US would pose a threat to Georgian values and identity.

According to the National Democratic Institute (NDI), “many of the myths and messages of well-tracked disinformation and anti-Western propaganda campaigns are reflected in people’s responses” in opinion polls [1]. The example of Georgia shows that what matters about disinformation is not how visually sophisticated it looks, but how effective it is. There is no need for AI and a perfectly manipulated video to disinform and spread fake news. In the end, there may always be people who believe false information, even if it does not come from a video created with the latest deepfake technology. A big part of the problem is that fake news is often multiplied via social networks like Facebook.

Journalists in particular should be aware that perfect video manipulations will soon be accessible to anyone, even to people who cannot code. Creating awareness through media literacy programs is one important step in educating people. But the public shouldn’t panic and assume that every video is fake. The real threat of deepfakes may not be the deepfake videos themselves, but that people could end up doubting their own reality.

Reporter at Deutsche Welle especially for Myth Detector

The article is published within the framework of the project #FIGHTFAKE, which is implemented by MDF in cooperation with its partner organisation Deutsche Gesellschaft e.V.

____________________________

[1] National Democratic Institute (NDI): Public Opinion Research. Foreign Policy, Security and Disinformation in Georgia. Second Edition, March 2018.